After the 2020 Labor Day Fires, we realized that no comprehensive needs and impact assessments were possible because no complete list of the humans affected by the fire existed in the community. Community leaders and emergency management professionals must ensure that in the future a People-Centered Disaster Registry is in place to support equitable, efficient response and recovery efforts.

Definitions:

- People-centered – as opposed to the tax-lot-focused data used in 2020. Structure damage assessment is crucial, but impacts on the social determinants of health of individual people must be addressed as well. Housing first, yes, but disaster recovery for humans requires long-term support.

- Disaster – many disasters are local, not nationally designated. Local leaders must be empowered to respond to smaller disasters, especially when national and state resources are scarce.

- Registry – not a spreadsheet with people’s names, but a rich set of data collected and updated over time that is tied to the provision of resources required for a full recovery.
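To make the difference between a spreadsheet row and a registry record concrete, here is a minimal sketch of what a record with history might look like. The class names, field names, and sample values are all hypothetical and are not drawn from any system we have built; they only illustrate data that is updated over time and tied to the resources a person receives.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class StatusUpdate:
    """One dated observation about a person's recovery (hypothetical fields)."""
    recorded_on: date
    housing_status: str        # e.g. "displaced", "temporary", "permanent"
    open_needs: list[str]      # e.g. ["housing", "documents"]


@dataclass
class RegistryRecord:
    """A person-centered record: an identity plus a history, not a single row."""
    person_id: str
    preferred_language: str
    updates: list[StatusUpdate] = field(default_factory=list)
    resources_provided: list[str] = field(default_factory=list)

    def add_update(self, update: StatusUpdate) -> None:
        # Keep the history in date order so the last entry is the latest one.
        self.updates.append(update)
        self.updates.sort(key=lambda u: u.recorded_on)

    def current_needs(self) -> list[str]:
        """Needs from the most recent update, or an empty list if none exist."""
        return list(self.updates[-1].open_needs) if self.updates else []


# Made-up sample data for illustration only.
record = RegistryRecord(person_id="p-001", preferred_language="es")
record.add_update(StatusUpdate(date(2020, 10, 1), "displaced", ["housing", "documents"]))
record.add_update(StatusUpdate(date(2021, 3, 1), "temporary", ["housing"]))
record.resources_provided.append("rental assistance")
print(record.current_needs())  # ['housing']
```

Because each update is kept rather than overwritten, the same record can answer both "what does this person need now?" and "how has their recovery progressed?"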

Why quality people-centered data matters

- Meet Needs – Local community organizations need to find and communicate with survivors so that they can provide immediate disaster response and ongoing recovery services.

- Fund Recovery – Funding for relief and recovery efforts requires proof of need. Without quality data on who is in need, communities are ill equipped to advocate for resources.

- Efficiency – Standardized, verified data is required to tailor programs to meet actual need as well as to eliminate fraud, waste, and abuse of recovery resources.

- Fill Gaps – Quality longitudinal data about current needs is required for tracking recovery progress and equity over time at the individual and community levels. Longitudinal and demographic data allows communities to identify demographic and geographic inequities and to do analysis and lessons-learned work after a disaster.

Read more about our learnings from the 2020 Labor Day Fires in this white paper published by SOU

Three People Who Really Need Quality Data

Citizens

- Plan for your household’s (or business’s) safety and self-sufficiency by collecting the required data, papers, and proof of ownership.

- Prepare for future recovery by purchasing adequate insurance if you can afford it, packing go bags, storing copies of records online, and learning response and recovery skills.

- Participate in local efforts with trusted local organizations, get CERT and other skills training, and get involved in the community to build trusting relationships before you and your community need them.

Local Community Leaders

- Contribute to your community’s disaster registry and MOU design with insights from your own data needs and experience working with survivors.

- Plan to participate in community data collection in a disaster.

- Actively support your community’s preparedness efforts to get a complete, verified set of data quickly after any future disaster via your COAD or other lead organization.

- Align on the importance and practices of data quality and how your team can model organizational virtue (daily actions aligned with values).

- Advocate for a seamless community solution based on shared systems – trust, insight, and flow in and around the CIE.

Disaster Response Leaders

- Trust is the foundation stone – collaborate with citizens and trusted community partners to design a solution with security and transparency; support trusted organizations in the community before, during, and after the disaster via RVCOG, COAD, and LTRG; and hold yourself accountable for meeting the commitments you’ve made.

- Insights from complete data – use data to swiftly drive resources and eligibility adjustments, collect longitudinal data to track recovery over time, update and refine data iteratively.

- Flow – intake data on people affected from partner orgs, ideally via the CIE, distribute insights broadly to everyone who can benefit from them, make deidentified data available to analysts from partner orgs, and hand off data seamlessly into the community’s CIE as people leave the DCMP.

What Makes Data High Quality?

The Institute of Electrical and Electronics Engineers defines high quality data by its:

- Completeness: The extent to which data are of sufficient breadth, depth and scope for the task at hand.

- Accuracy: The extent to which data are correct, reliable and certified.

- Timeliness: The extent to which the age of the data is appropriate for the task at hand.

- Consistency: The extent to which data are presented in the same format and compatible with previous data.

- Accessibility: The extent to which information is available, or easily and quickly retrievable.

Source: IEEE
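Some of these dimensions can be checked automatically as data comes in. The sketch below is one possible approach, not a description of any existing system: the required fields, the 90-day freshness window, the phone format, and the sample records are all assumptions made for illustration.

```python
import re
from datetime import date
from typing import Optional

# Hypothetical set of fields a community might require for every person.
REQUIRED_FIELDS = ("name", "phone", "pre_disaster_address", "household_size")


def completeness(record: dict) -> float:
    """Share of required fields that hold a non-empty value (0.0 to 1.0)."""
    filled = sum(1 for f in REQUIRED_FIELDS if record.get(f) not in (None, ""))
    return filled / len(REQUIRED_FIELDS)


def is_timely(record: dict, today: date, max_age_days: int = 90) -> bool:
    """True if the record was updated within max_age_days of today."""
    last = record.get("last_updated")
    return last is not None and (today - last).days <= max_age_days


def normalize_phone(raw: str) -> Optional[str]:
    """Consistency: store US phone numbers as 10 digits, or None if unusable."""
    digits = re.sub(r"\D", "", raw or "")
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the leading country code
    return digits if len(digits) == 10 else None


# Made-up sample records for illustration only.
records = [
    {"name": "A. Rivera", "phone": "(541) 555-0100",
     "pre_disaster_address": "12 Oak St", "household_size": 3,
     "last_updated": date(2021, 1, 10)},
    {"name": "B. Chen", "phone": "",
     "pre_disaster_address": None, "household_size": 2,
     "last_updated": date(2020, 9, 20)},
]
today = date(2021, 2, 1)

for r in records:
    print(r["name"], completeness(r), is_timely(r, today), normalize_phone(r["phone"]))
# A. Rivera 1.0 True 5415550100
# B. Chen 0.5 False None
```

Accuracy and accessibility are harder to score in code; they depend on verification with the person and on the sharing agreements between organizations.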

High quality data builds trust, offers insights, and allows confident sharing to those who can use the data to help disaster survivors.

We adopted a model of effective data management from the Harvard Business Review article “The New Rules of Data Privacy,” which states that, to be effective, data usage must emphasize:

- Trust over transactions

- Insight over identity

- Flows over silos

Digital Disaster After Natural Disaster

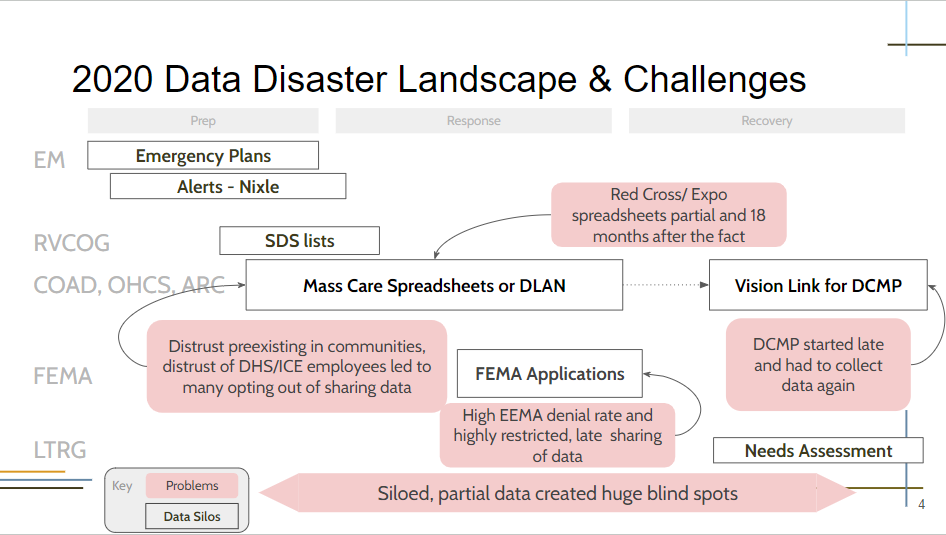

Here’s an image of the key data issues we had after the 2020 Labor Day fires in Southern Oregon.

Source slide (reuse of our slides is encouraged, rules here)

After the 2020 Labor Day fires, we had a data disaster because we lacked:

Trust

- Trustworthy, complete data requires that we earn the trust of the community, especially elders, speakers of other languages, the houseless, and those with substance use and mental health challenges

- Survivors were expected to share the same data over and over, usually with no tangible benefit

- Broken implicit promise of resources in exchange for completing data surveys

- Survivors had no organizations they trusted with their data that also directed resources. FEMA and the US DHS combine agencies of control and care

Insight

- No overview of losses and needs – only a kaleidoscope of siloed, incomplete, inconsistent demographic data which made identifying and advocating for needs more difficult

- No longitudinal (over time) data

- No way to identify gaps in services or to see how equitably our resources were distributed

- No way for CBOs to use data to direct and show the impact of their services

- No comprehensive picture of need to use to advocate for resources

Flow

- No or overly restrictive sharing agreements, so the data was by definition incomplete and siloed

- 18 months later, very incomplete, siloed, and messy data was shared

- People who got the data were not allowed to use it in the community

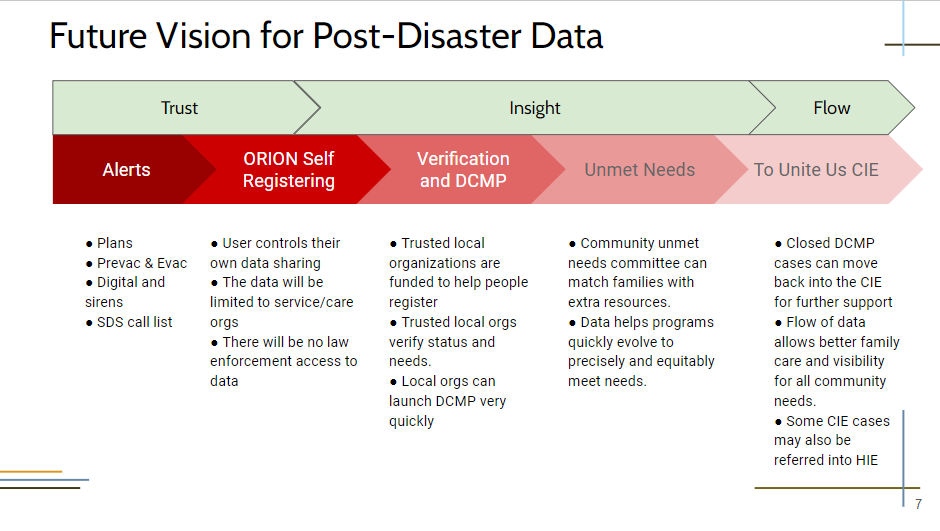

Our Vision of the Post-disaster Digital Future

In the wake of a disaster an affected person easily finds a trusted community member who collects key data that allows the community to:

- Register and verify everyone who is impacted by the disaster

- Communicate with survivors about available resources and needs

- Track the recovery over time so everyone in our community who needs help is counted and cared for equitably

- Advocate for disaster-specific funding directly linked to need

- Learn from data and analysis, so leaders can improve preparation, response, and recovery for any future disaster

Our vision for what data collection, use, and flow should look like going forward:

We have realized that a disaster, and the response to it, should be seen as a temporary setback in the community’s efforts to address the social determinants of health of all our neighbors.

Therefore, we imagine a community care technology landscape that integrates the disaster response data into existing Community and Health Information Exchange systems so people recover and continue to get the support they need at every step of their path towards a full, healthy life.

Suggested Actions

To begin stepping toward this vision of data Trust, Insight and Flow, we offer the following suggestions:

- Empower and visibly serve data subjects – let subjects enter, update, and control their own data with verification loops built into the process. Link resources directly to the data, for example: “When you offer us this data, you will be in the queue for a DCM.”

- Standardize and centralize data collection – reduce retraumatization and duplication of effort, and add equity by tracking basic demographic data on survivors

- Partner with local organizations to ensure a complete list is collected – organizations with 24/7 local presence have the trust required to bring everyone affected into the list. True partnership requires a two way flow of commitments, resources, and honest feedback.

- Update data longitudinally – to track and support people’s recovery and build valuable data on how recovery actually happens, and not

- Share anonymized data and analytics with partner organizations so they can tell their own stories using the data.

- Flow subject data to community care organizations – via the CIE to allow health and human services organizations to continue supporting survivors post disaster.
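As an illustration of sharing anonymized data with partner organizations, the sketch below summarizes records by group, leaves out names entirely, and hides any group smaller than a threshold. The function name, field names, ZIP codes, and the threshold of 5 are assumptions chosen for the example, and all grouping values are assumed to be text.

```python
from collections import Counter


def deidentified_counts(records, group_fields, min_cell_size=5):
    """Count records per group, omitting identifiers and hiding small groups."""
    counts = Counter(
        tuple(r.get(f) or "unknown" for f in group_fields) for r in records
    )
    summary = []
    for group, n in sorted(counts.items()):
        row = dict(zip(group_fields, group))
        # Suppress small cells so a rare combination cannot single out a person.
        row["count"] = n if n >= min_cell_size else f"<{min_cell_size}"
        summary.append(row)
    return summary


# Made-up sample records for illustration only.
records = (
    [{"name": f"person-{i}", "zip": "97540", "language": "en"} for i in range(6)]
    + [{"name": f"person-{i}", "zip": "97540", "language": "es"} for i in range(6, 8)]
    + [{"name": f"person-{i}", "zip": "97535", "language": "es"} for i in range(8, 13)]
)

summary = deidentified_counts(records, ("zip", "language"))
for row in summary:
    print(row)
# {'zip': '97535', 'language': 'es', 'count': 5}
# {'zip': '97540', 'language': 'en', 'count': 6}
# {'zip': '97540', 'language': 'es', 'count': '<5'}
```

Hiding small groups is only one safeguard. What counts as sufficiently anonymized should be decided with the community and written into the sharing agreements.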

Who Is Behind This Project?

In the process of our work so far, we interviewed over 30 leaders active in the response to Oregon’s 2020 Labor Day Fires. The content you see here is our compilation of the best thinking of a variety of leaders in our community. Any credit should go to them, and any suggestions for improvement should come to us.

The identification of the data disaster came out of the JCC LTRG’s needs assessment work, led by Barry Braden, Maya Jarred, Ellie Holty, Caryn Wheeler-Clay, and Echo Fields.

This project has been led, so far, by Stephen Bárczay Sloan, of the Humane Leadership Institute and Local Innovation Works. He raised his family in the Rogue Valley and has had a career spanning software development and operations leadership from small businesses to global IT firms. After the 2020 fires, Stephen dove into community recovery working on the Zone Captains program, the Local Innovation Lab, needs assessment, Reimage and Rebuild Rogue Valley (rthreev.org), and support for JCC LTRG leadership.

Crucial, timely support has been provided by Southern Oregon University’s Institute for Applied Sustainability with special assistance from Professor Bret Anderson.

Local Innovation Lab interns Katherine Hardeberg, Kendra Lellis, and Mimi Pieper have also contributed to the project.

Please reach out if you have any questions about how this might be useful to your organization or agency.

Learn more about the early vision of the project on the DLAD Genesis page.

Our Values Around Data Work

- Best Practices – build upon the best thinking of others, in this case the principles, or recitals, of the European Union’s General Data Protection Regulation (GDPR), including:

- “The protection of natural persons in relation to the processing of personal data is a fundamental right.” recital 1

- “The processing of personal data should be designed to serve mankind.” recital 4

- And is subject to each person’s right to be forgotten – recital 66

- Trust – must be earned through daily efforts towards clarity and care for natural humans. More on building community trust here.

- Effectiveness – data allows us to focus resources and effort to make the most difference most quickly. It also allows us to advocate more accurately for the amount and type of resources needed

- Equity – having a complete understanding of who was impacted allows for fair allocation of resources to those in most need

- Efficiency – we work with one source of valid data to save impacted people time and help recovery organizations coordinate their efforts

How you can help

- Use the ideas, images, and suggestions we offer here to refine your personal, organizational, and community efforts to avoid future data disasters

- Share this webpage with anyone you think might be interested via your email newsletter or social media channels

Reach out with any questions or suggestions you have.